Completely un-scientific benchmarks of some embedded databases with Python

I've spent some time over the past couple of weeks playing with the embedded NoSQL databases Vedis and UnQLite. Vedis, as its name might indicate, is an embedded data-structure database modeled after Redis. UnQLite is a JSON document store (like MongoDB, I guess?). Beneath their higher-level APIs, both Vedis and UnQLite are key/value stores, which puts them in the same category as BerkeleyDB, KyotoCabinet and LevelDB. The Python standard library also includes some dbm-style databases, including GDBM.

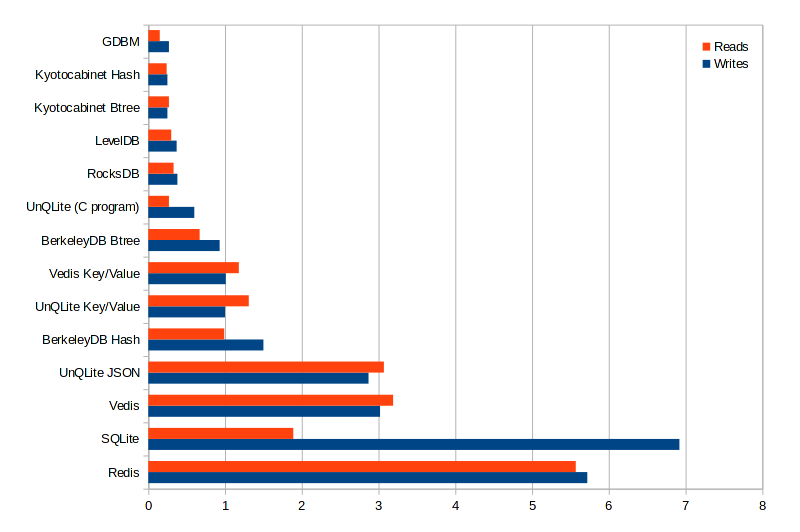

For fun, I thought I would put together a completely un-scientific benchmark showing the relative speeds of these various databases for storing and retrieving simple keys and values.

Here are the databases and drivers that I used for the test:

- UnQLite: unqlite-python (ctypes)

- Vedis: vedis-python (ctypes)

- GDBM: standard library (C)

- BerkeleyDB (b-tree and hash-table): bsddb3 (C)

- KyotoCabinet (b-tree and hash-table): kyotocabinet (C++)

- LevelDB: plyvel (Cython)

- RocksDB: pyrocksdb (Cython)

- SQLite: standard library (C and Python)

- Redis: redis-py (Python) -- this one is just for fun!
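SQLite is the odd one out in this list, since it is a relational database rather than a key/value store. One way to use it like the others, following the schema a commenter suggests below (a two-column key/value table indexed by key), is a small dict-like wrapper. This is a minimal Python 3 sketch; the `SqliteKV` class and the `kv` table name are hypothetical and are not taken from the benchmark gist.

```python
import sqlite3


class SqliteKV(object):
    """Hypothetical dict-like key/value wrapper over a two-column SQLite table."""

    def __init__(self, filename=":memory:"):
        self.conn = sqlite3.connect(filename)
        # Declaring key as PRIMARY KEY gives it an index, so lookups by key
        # do not have to scan the whole table.
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS kv (key TEXT PRIMARY KEY, value TEXT)")

    def __setitem__(self, key, value):
        # INSERT OR REPLACE overwrites an existing key, like assigning to a dict.
        self.conn.execute(
            "INSERT OR REPLACE INTO kv (key, value) VALUES (?, ?)", (key, value))

    def __getitem__(self, key):
        row = self.conn.execute(
            "SELECT value FROM kv WHERE key = ?", (key,)).fetchone()
        if row is None:
            raise KeyError(key)
        return row[0]

    def commit(self):
        self.conn.commit()

    def close(self):
        self.conn.close()


if __name__ == "__main__":
    db = SqliteKV()
    db["k1"] = "v1"
    db.commit()
    print(db["k1"])  # v1
    db.close()
```

Because the `sqlite3` module implicitly opens a transaction before an INSERT, calling `commit()` once after a batch of writes, rather than after every write, avoids paying for a commit per key.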

I'm running these tests with:

- Linux 3.14.4

- Python 2.7.7 (Py2K 4 lyfe!)

- SSD

For the test, I simply recorded the time it took to store 100K simple key/value pairs (no collisions). Then I recorded the time it took to read back all these values. The results are in seconds elapsed:

Interpreting the results

The standard library GDBM implementation outstripped the competition, which I believe is mostly due to the comparative simplicity of the library. KyotoCabinet and LevelDB were also quite impressive, even more so given the rich feature sets of these two libraries. A commenter on Reddit pointed out to me that GDBM, KyotoCabinet and LevelDB do not allow concurrent access to the database.

Using their key/value APIs, UnQLite and Vedis performed almost exactly the same, but their high-level APIs were significantly slower. I attribute the similarity of the key/value results to the fact that the two libraries share much of the same architecture and implementation from the key/value storage layer on down. The high-level Vedis API involves a deeper Python call stack, a tokenizing step, the creation of an execution context, the creation of a value object to store the result, etc., so I'm not too surprised it was quite a bit slower. The high-level UnQLite collection APIs are similarly complex: all Python values must first be converted to UnQLite array data structures (and the reverse happens when reading data from a collection). UnQLite collections are also accessed by creating a Jx9 virtual machine and executing a Jx9 script.
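To make the layering argument concrete, here is a toy Python 3 model (not Vedis or UnQLite code) that compares a direct key/value write with a write routed through a Redis-style command string: tokenize, build an execution context, dispatch, then wrap the result in a value object. The names `kv_set`, `command_set` and `time_calls` are hypothetical, and a plain dict stands in for the storage layer.

```python
import time

store = {}  # stand-in for the shared key/value storage layer


def kv_set(key, value):
    # Low-level path: a single direct call into the key/value layer.
    store[key] = value


def command_set(command):
    # Hypothetical high-level path, loosely modeled on a command API.
    # None of these steps are taken from the Vedis source.
    tokens = command.split()                                   # tokenizing step
    context = {"name": tokens[0].upper(), "args": tokens[1:]}  # execution context
    if context["name"] != "SET" or len(context["args"]) != 2:
        raise ValueError("unsupported command: %r" % command)
    key, value = context["args"]
    kv_set(key, value)                                         # same storage call underneath
    return {"type": "status", "value": "OK"}                   # result value object


def time_calls(n=100000):
    """Return (direct_seconds, layered_seconds) for n writes on each path."""
    start = time.perf_counter()
    for i in range(n):
        kv_set("k%d" % i, "v%d" % i)
    direct = time.perf_counter() - start

    start = time.perf_counter()
    for i in range(n):
        command_set("SET k%d v%d" % (i, i))
    layered = time.perf_counter() - start
    return direct, layered
```

Both paths end in the same `kv_set()` call, so any gap between the two timings comes from the extra layers alone. The gap in the real libraries will differ, since most of their parsing and execution happens in C rather than Python.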

I profiled the UnQLite test to get an idea of how much overhead ctypes was adding, and it doesn't look too bad. Most of it seemed to come from a lot of calls to isinstance() and create_string_buffer(). I think the latter is the main reason reads from UnQLite and Vedis were slower than writes, since every read requires creating a ctypes string buffer on the Python side.
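The suspected read-path cost can be isolated with `ctypes` alone. In this hypothetical Python 3 model, a ctypes buffer stands in for a value owned by the C library, and each read either allocates a fresh buffer with `create_string_buffer()` (the pattern described above) or reuses one preallocated buffer for comparison. It does not call UnQLite or Vedis and is not how unqlite-python is written; `VALUE`, `SOURCE` and the helper functions are illustrative names only.

```python
import ctypes
import time

# Assumption: this buffer plays the role of a value held by the C library.
VALUE = b"some value bytes"
SIZE = len(VALUE)
SOURCE = ctypes.create_string_buffer(VALUE)  # SIZE + 1 bytes, NUL-terminated


def read_with_new_buffer(src=SOURCE, size=SIZE):
    # Allocate a fresh ctypes string buffer on every read, let the "C side"
    # fill it, then convert it back to Python bytes.
    buf = ctypes.create_string_buffer(size)
    ctypes.memmove(buf, src, size)
    return buf.raw


def read_with_reused_buffer(buf, src=SOURCE, size=SIZE):
    # Comparison case: copy into one preallocated buffer shared across reads.
    ctypes.memmove(buf, src, size)
    return buf.raw[:size]


def compare(n=100000):
    """Return (fresh_buffer_seconds, reused_buffer_seconds) for n reads."""
    start = time.perf_counter()
    for _ in range(n):
        read_with_new_buffer()
    fresh = time.perf_counter() - start

    reused = ctypes.create_string_buffer(SIZE)
    start = time.perf_counter()
    for _ in range(n):
        read_with_reused_buffer(reused)
    shared = time.perf_counter() - start
    return fresh, shared
```

Whether the per-read allocation matters on a given machine is something to measure with `compare()` rather than assume; reusing a buffer also means the caller must copy the bytes out before the next read, which is why `.raw` slicing returns a new `bytes` object here.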

For fun I wrote a little C program to get a baseline measurement for UnQLite. In C, the reads were twice as fast as the writes, whereas in the Python tests the reads took slightly longer than the writes. This seems to me to support my hypothesis about create_string_buffer(). Comparing the C and Python versions, the C code performed the reads about 4x faster than the Python implementation, and the writes less than 2x faster.

Overall I was pleasantly surprised to see Vedis and UnQLite do as well as they did.

Benchmark code

If you'd like to try the tests out yourself, you can find the benchmarking code in this gist:

https://gist.github.com/coleifer/3057f97a7628d44c2e59

You will need to install the following Python libraries:

- unqlite

- vedis

- bsddb3

- plyvel

- pyrocksdb

- redis

- kyotocabinet (source link)
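The actual benchmark lives in the gist above. As a rough illustration of the write-then-read timing it describes (100K unique keys written, then read back), here is a minimal Python 3 sketch. Unlike the original runs on Python 2.7, it uses `time.perf_counter()`, and the `benchmark()` function and key format are hypothetical. It works with any store that supports `db[key] = value` and `db[key]`, such as a standard library `dbm` handle.

```python
import time


def benchmark(db, n=100000):
    """Time n writes, then n reads, against any dict-like store.

    Returns (write_seconds, read_seconds). Every key is unique, so there
    are no collisions.
    """
    # Build the keys up front so string formatting is not part of the timing.
    keys = ["key-%d" % i for i in range(n)]

    start = time.perf_counter()
    for key in keys:
        db[key] = key  # assumption: each value is simply its own key
    write_seconds = time.perf_counter() - start

    start = time.perf_counter()
    for key in keys:
        db[key]  # read back every value; the result is discarded
    read_seconds = time.perf_counter() - start

    return write_seconds, read_seconds
```

For example, `with dbm.open("/tmp/bench.db", "n") as db: print(benchmark(db))` times whichever `dbm` backend is available on your system (the path is arbitrary). Note that `dbm` handles return values as bytes even when you store str.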

Thanks!

Thanks for taking the time to read this post, I hope you found it interesting. As always, if you have any questions or comments, please feel free to leave a comment below.

Links

- unqlite-python on GitHub

- vedis-python on GitHub

- Kyotocabinet python API

- Building the Python SQLite driver for use with BerkeleyDB

- Introduction to the fast, new UnQLite bindings

- Alternative Redis-like databases with Python

Comments (5)

Itamar Haber | jun 30 2014, at 09:20am

Un-scientific but still lots of fun - thanks for sharing! However, is it really surprising that a native library outperforms an embedded db, which outperforms a standalone process? ;)

Matthew Story | jun 30 2014, at 09:18am

Would also be nice to see cdb in the comparison list here:

http://cr.yp.to/cdb.html

Charlie | jun 29 2014, at 03:10pm

Thanks for the suggestion, I've gone ahead and added sqlite to the benchmark script.

Oren Tirosh | jun 29 2014, at 02:42pm

For reference, it would be nice to have SQLite in your benchmark, too. Schema would be a simple two column (key, value) table, indexed by key.


Charlie | jun 30 2014, at 09:31am

Glad you enjoyed it, Itamar. I was surprised by two things:

ctypes, whereas some of the others are compiled.